Why, When, and How to Make the Leap

Rapid reviews are workhorses. They get evidence into the hands of decision-makers fast in order to inform early strategic planning, identify evidence gaps, and shape the direction of subsequent research. Increasingly, they also leverage artificial intelligence (AI) to compress timelines even further, with tools assisting in search strategy development, screening, extraction, and reporting.

However, the questions that initially justified a rapid review rarely disappear. The evidence base continues to evolve, stakeholders request ongoing updates, and what began as a quick-turnaround deliverable is often extended far beyond its original scope. Likewise, the AI that enabled the rapid review does not become obsolete; instead, its value increases as the review expands. At the same time, both the use of AI and its implementation must be formalized to support sustained and scalable application.

This post walks through the rationale for converting rapid reviews into living evidence syntheses, the practical considerations involved (including how to handle AI integration responsibly), and how a platform like Nested Knowledge can help manage the transition.

Rapid Reviews Sit on a Methodological Continuum

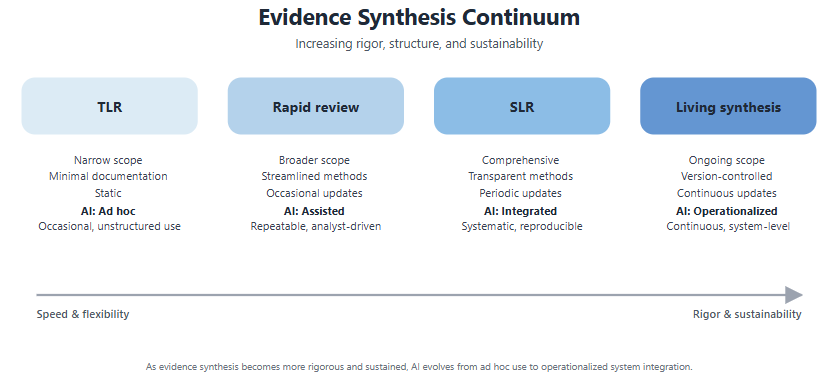

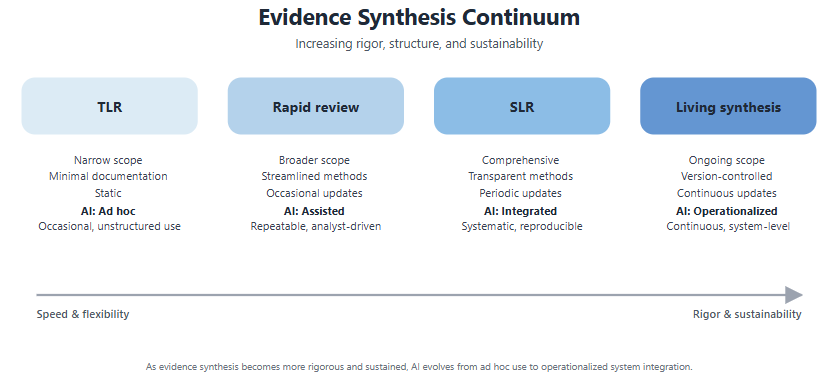

A “rapid review” means different things to different people, but in practice these reviews sit on a continuum between lightweight targeted literature reviews (TLRs) and full systematic literature reviews (SLRs), with living evidence synthesis as the natural next step.

Use of AI also maps naturally onto this continuum. At the TLR and rapid review end, teams tend to use AI freely with minimal documentation. As you move toward living synthesis, AI becomes more valuable for managing the operational burden of ongoing updates, but it needs to be wrapped in validation, audit trails, and version-tracked methodology. The path to living synthesis isn’t about removing AI; it’s about formalizing how AI is used.

Because rapid reviews follow an SLR-like framework, only executed faster and with less rigor, they are well suited to being developed into comprehensive, methodologically robust syntheses. This has been common practice in HEOR for years, but there is a growing recognition that the end state shouldn’t just be a static SLR; it should be a living synthesis that’s continuously maintained and updated, with AI transparently integrated at every stage.

An Evolving Guidance Landscape for AI in Evidence Synthesis

Before getting into the mechanics of upgrading a review, it’s worth understanding the current state of guidance around AI use in evidence synthesis, because this shapes what “responsible” looks like at each point on the continuum.

In recent years, efforts to clarify best practices for responsible AI integration in evidence synthesis have led to the development of comprehensive frameworks such as the RAISE (Responsible use of AI in evidence SynthEsis) initiative. This collaboration across 30+ organizations has produced a three-paper framework covering recommendations for practice (RAISE 1), guidance on building and evaluating AI tools (RAISE 2), and guidance on selecting and using AI tools in specific syntheses (RAISE 3). Cochrane, the Campbell Collaboration, Joanna Briggs Institute (JBI), and the Collaboration for Environmental Evidence published a joint position statement in 2025 endorsing the RAISE framework and establishing that AI use must not compromise methodological rigor; it requires human oversight and must be transparently reported.

Guidance on AI use in literature reviews that generate evidence for submissions to Health Technology Assessment (HTA) bodies is much thinner. The National Institute for Health and Care Excellence (NICE) published the first HTA-specific position statement on AI in evidence generation in 2024, outlining expectations around transparency, validation, human oversight, and the principle that AI should augment rather than replace human involvement. Canada’s Drug Agency (CDA-AMC) followed in 2025 with a similar statement modeled on the NICE approach. Beyond these two agencies, most HTA bodies have not yet issued formal guidance on AI use in evidence submissions.

This creates a practical tension: methodological best practice (via RAISE) sets a high bar for AI transparency and documentation, while most HTA agencies haven’t yet specified what they expect. For teams converting rapid reviews into submission-ready syntheses, the safest approach is to build documentation and validation into AI-assisted workflows from the outset, rather than hoping requirements will remain minimal.

Why Convert? Three Common Scenarios

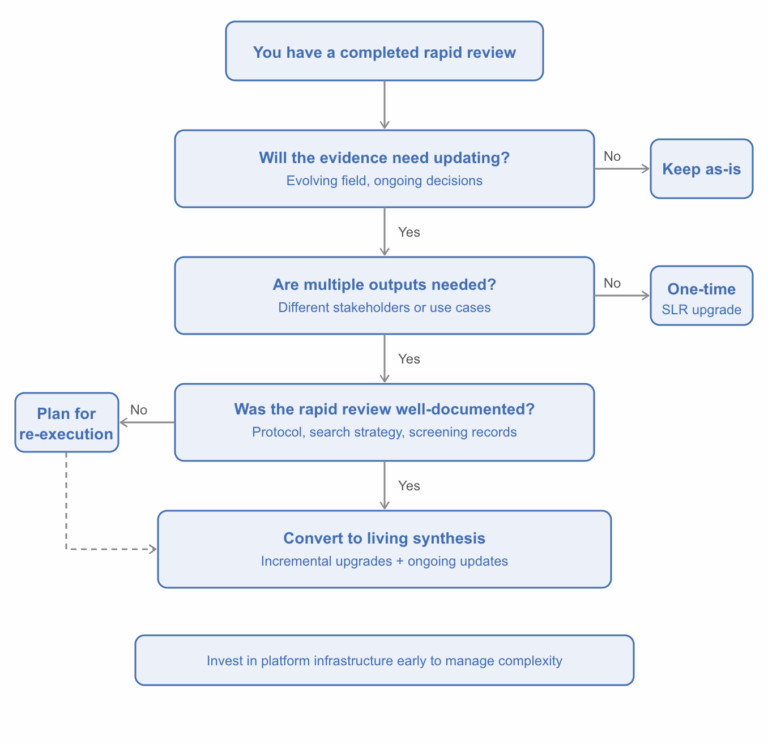

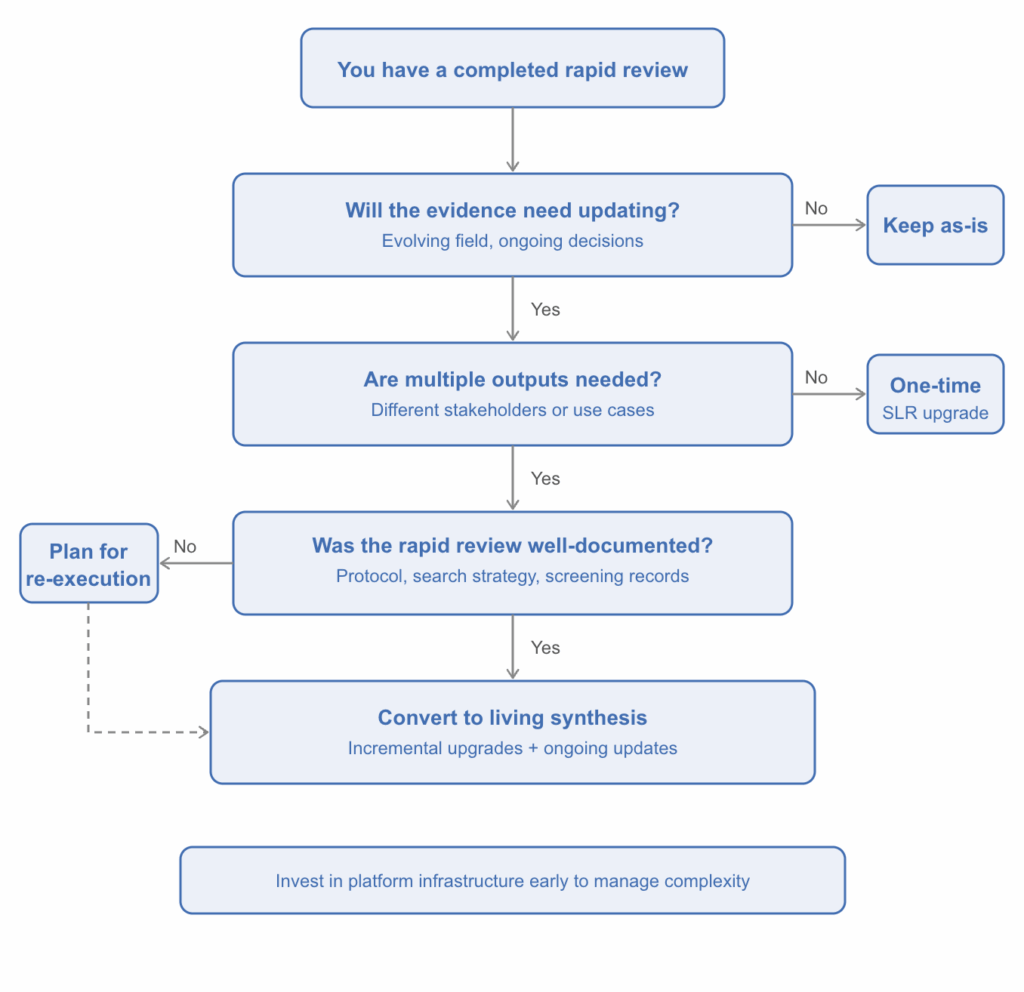

Not every rapid review needs to become a living synthesis. The decision flowchart below can help you think through whether the conversion makes sense for your situation.

When the answer is yes, the rationale usually stems from one of a few recurring situations:

- The evidence matures and the original review can’t keep up. Consider a scenario where a rapid review was conducted in a fast-moving therapeutic area, perhaps during the early stages of a disease outbreak or following an initial product launch. Early evidence might be dominated by case series, preprints, and preliminary data. As the field matures, that original review no longer reflects the current evidence base. A structured, living approach ensures the synthesis evolves alongside the evidence.

- Stakeholders need ongoing, up-to-date evidence across multiple use cases. A rapid review conducted to inform early cost-effectiveness modeling, for instance, may later need to support regulatory submissions, value dossier development, or clinical guideline input across different geographies. A living synthesis can serve as a single master evidence base that supports multiple downstream outputs without duplicating effort.

- The competitive or regulatory landscape shifts. New comparators emerge, treatment guidelines change, or submission requirements evolve. A static review can’t accommodate these shifts without being essentially re-done. A living framework absorbs new evidence incrementally and adapts to changing requirements over time.

What Actually Changes When You Convert?

Converting a rapid review isn’t just a matter of running more searches. The transition touches nearly every methodological dimension, from documentation and search strategy through to screening, extraction, quality assessment, and (critically) how AI is governed. Collectively, these methodological enhancements strengthen the credibility, reproducibility, and adaptability of the synthesis, allowing it to evolve in parallel with the expanding evidence base.

Table 1. Main methodological aspects that are modified when moving from a rapid review to a living synthesis.

| Task | Rapid review | Living synthesis |
| --- | --- | --- |
| Protocol | May be informal or absent | Formal, version-controlled, predefined PICOS |
| Search strategy | 1–2 databases, focused terms, pragmatic filters | Multi-database, comprehensive, information specialist–reviewed |
| Screening | Single reviewer, possibly AI-assisted | Dual screening or single + validation sample; AI audit trail |
| Extraction | Pragmatic, key outcomes only, possibly AI-assisted with partial human verification | Structured forms, expanded scope, verified by second reviewer |
| Quality assessment | Often abbreviated or omitted | Validated tools (e.g., Cochrane RoB 2.0, Drummond’s checklist) |
| Update approach | Ad-hoc or one-time update | Scheduled or signal-based monitoring with defined cadence |
| Version control | Minimal tracking | Locked snapshots per deliverable + evolving master review |
| AI governance | Tools used freely; documentation optional | RAISE-aligned: tool versioning, validation rates, human oversight, and methods logged per cycle |

The most commonly underestimated area is search strategy. Expanding a pragmatic one- or two-database search into a comprehensive multi-database strategy often requires fundamental restructuring, not just adding terms. Engaging an information specialist at this stage is recommended, even if one wasn’t involved in the original review.

AI governance deserves equal attention. A rapid review might use AI-assisted screening with a brief note in the methods section. A living synthesis aligned with RAISE principles requires documenting the specific tools used (including version), how the AI was validated against human decisions, what performance thresholds were applied, and how AI-generated outputs were verified. If AI tools change between update cycles (as they inevitably will), the methodology needs to account for this transparently.
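As a rough illustration of what "validated against human decisions" can mean in practice, the sketch below compares AI and human include/exclude decisions on a shared validation sample and reports recall, precision, and Cohen's kappa. The function name, the sample data, and the 0.95 recall threshold are all assumptions made for this example; they are not taken from RAISE or from any HTA guidance, and a real protocol would define its own metrics and thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScreeningValidation:
    """Agreement between AI and human include/exclude decisions."""
    recall: float     # share of human-included records the AI also included
    precision: float  # share of AI-included records the human also included
    kappa: float      # Cohen's kappa (chance-corrected agreement)


def validate_screening(human: list[bool], ai: list[bool]) -> ScreeningValidation:
    """Compare AI decisions with human decisions on the same validation sample."""
    if len(human) != len(ai) or not human:
        raise ValueError("Need two non-empty decision lists of equal length")
    n = len(human)
    tp = sum(h and a for h, a in zip(human, ai))
    fp = sum((not h) and a for h, a in zip(human, ai))
    fn = sum(h and (not a) for h, a in zip(human, ai))
    tn = n - tp - fp - fn
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    observed = (tp + tn) / n
    # Agreement expected by chance, from each rater's marginal include rate.
    expected = ((tp + fn) * (tp + fp) + (tn + fp) * (tn + fn)) / (n * n)
    kappa = (observed - expected) / (1 - expected) if expected < 1 else 1.0
    return ScreeningValidation(recall, precision, kappa)


# Hypothetical validation sample: True = include, False = exclude.
human_decisions = [True, True, True, False, False, False, False, False]
ai_decisions = [True, True, False, True, False, False, False, False]

result = validate_screening(human_decisions, ai_decisions)
RECALL_THRESHOLD = 0.95  # assumed, protocol-defined value for illustration only
meets_threshold = result.recall >= RECALL_THRESHOLD
```

Logging these figures alongside the tool name and version for each update cycle gives a simple, comparable record when the tool changes between cycles.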

The Living Review Layer: Sustainability Matters

Making a review “living” adds another set of considerations on top of the initial conversion. The ongoing operational demands are real and shouldn’t be underestimated:

- Update cadence. Not every review benefits from fixed quarterly updates. In some fields, evidence accumulates slowly, and scheduled updates may yield nothing new. A signal-based monitoring approach, where formal updates are triggered by literature surveillance and the identification of relevant new evidence rather than a calendar date, can be more efficient.

- Version control. If the living review supports multiple outputs (e.g., submissions to different regulatory bodies on different timelines), you need a clear system for maintaining “locked” versions for specific deliverables alongside the evolving master review. Tracking which evidence, search dates, and methods apply to each output is critical and quickly becomes complex.

- AI consistency across update cycles. This is where the RAISE framework is particularly relevant. As AI tool capabilities evolve and models are updated, studies processed in earlier cycles may have been handled differently than those in later cycles. Maintaining a clear record of which AI tools, versions, and validation approaches were applied to which studies, and ensuring defensible consistency across the evidence base, is essential for both methodological integrity and potential HTA scrutiny.
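The signal-based cadence described in the first point above can be sketched as a simple decision rule. This is a minimal sketch under stated assumptions: the function name, the threshold of five flagged records, and the one-year backstop interval are hypothetical and would need to be set in the review protocol.

```python
from datetime import date, timedelta


def update_due(
    new_relevant_records: int,
    last_update: date,
    today: date,
    signal_threshold: int = 5,                      # assumed value
    max_interval: timedelta = timedelta(days=365),  # assumed backstop
) -> bool:
    """Return True when a formal update cycle should be triggered.

    An update fires when surveillance has flagged enough potentially relevant
    records (the signal), or when the backstop interval has elapsed.
    """
    if new_relevant_records < 0:
        raise ValueError("new_relevant_records must be non-negative")
    signal = new_relevant_records >= signal_threshold
    overdue = (today - last_update) >= max_interval
    return signal or overdue


# Hypothetical surveillance results three months after the last update.
quiet_period = update_due(2, date(2025, 1, 1), date(2025, 4, 1))
busy_period = update_due(6, date(2025, 1, 1), date(2025, 4, 1))
```

The backstop interval is a design choice rather than a requirement: it guarantees that a slow-moving field still gets a documented check even when the signal never fires.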

How Nested Knowledge Supports the Rapid-to-Living Transition

Many of the challenges described above, from version control to AI governance and documentation, are fundamentally problems of workflow management and data provenance. Nested Knowledge was designed to address exactly these needs, and its AI capabilities are built into the platform in a way that supports transparent, auditable use by default.

- Updatable, integrated search. Nested Knowledge’s AutoLit module supports direct PubMed searching with updatable queries, as well as import from other databases, with automatic deduplication across sources. Search updates for a living review can be executed and tracked within the same platform where screening and extraction happen, rather than across disconnected tools.

- AI-assisted screening. The platform offers both Robot Screener (inclusion-prediction AI) and Smart Screener (LLM) capabilities, alongside configurable dual-screening workflows where AI serves as a second reviewer with human adjudication. Critically, this means AI-assisted screening decisions are captured within the same system that manages human review, making it straightforward to document concordance rates and adjudication outcomes in line with RAISE expectations.

- Smart extraction and critical appraisal. Adaptive Smart Tags and Smart Critical Appraisal tools can generate initial data extraction and quality assessment outputs that human reviewers then validate. For living reviews with recurring update cycles, this reduces the per-cycle workload while maintaining a human-verified evidence base.

- Structured tagging and hierarchy management. Nested Knowledge’s tagging hierarchy allows teams to organize and categorize evidence using structured, customizable frameworks. This is especially valuable when a living review needs to serve multiple outputs: the same underlying evidence can be tagged and filtered for different purposes without maintaining separate review databases.

- Nest versioning and archiving. Nested Knowledge allows teams to save and archive versions of a nest at defined points in time, and to create nest copies that function as subsets of the wider evidence base. This directly addresses one of the most persistent operational challenges in living reviews: maintaining locked snapshots for specific deliverables (such as an HTA submission tied to a particular search date) while continuing to update the master review. Rather than managing parallel spreadsheets or duplicating entire workflows manually, teams can branch from a single source of truth and preserve a clear lineage between the master evidence base and each downstream output.

- PRISMA and synthesis outputs. As records move through the workflow, Nested Knowledge auto-generates PRISMA diagrams and synthesis outputs (both qualitative and quantitative). For living reviews, this means reporting artifacts stay current with the evidence base rather than requiring manual reconstruction at each update.

- Audit trails and provenance tracking. Nested Knowledge logs individual screening decisions, extraction edits, and protocol changes with timestamps and user IDs across the entire review lifecycle. For living reviews that span multiple update cycles and team members, this level of granularity means every methodological decision is traceable, from who screened which record to when an extraction form was modified and by whom. This built-in provenance tracking supports RAISE-aligned transparency requirements, HTA submission documentation, and internal quality assurance without requiring teams to maintain separate audit logs.
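To make the idea of a locked snapshot alongside an evolving master review more concrete, here is a generic sketch of the concept. It does not represent Nested Knowledge's API or internal implementation; the `LockedSnapshot` class, the `lock_snapshot` function, the record IDs, and the tool names are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LockedSnapshot:
    """Immutable record of what a specific deliverable was based on."""
    deliverable: str
    search_date: date
    record_ids: tuple[str, ...]
    ai_tool_versions: tuple[tuple[str, str], ...]
    checksum: str


def lock_snapshot(
    deliverable: str,
    search_date: date,
    master_records: dict[str, date],  # record ID -> date added to the master review
    ai_tool_versions: dict[str, str],  # tool name -> version string
) -> LockedSnapshot:
    """Freeze the records available on or before the search date."""
    ids = tuple(sorted(r for r, added in master_records.items() if added <= search_date))
    tools = tuple(sorted(ai_tool_versions.items()))
    payload = json.dumps(
        {
            "deliverable": deliverable,
            "search_date": search_date.isoformat(),
            "record_ids": ids,
            "ai_tool_versions": tools,
        },
        sort_keys=True,
    )
    # The checksum lets anyone confirm later that the snapshot is unchanged.
    checksum = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return LockedSnapshot(deliverable, search_date, ids, tools, checksum)


# Hypothetical master review and one locked deliverable.
master = {
    "rec-001": date(2024, 11, 3),
    "rec-002": date(2025, 1, 20),
    "rec-003": date(2025, 6, 9),
}
snapshot = lock_snapshot(
    "HTA submission A", date(2025, 3, 31), master, {"screening-model": "1.4.0"}
)
master["rec-004"] = date(2025, 9, 1)  # the master keeps evolving; the snapshot does not
```

Because the snapshot stores its own search date, record list, and tool versions, each downstream output can be traced back to exactly the evidence and methods that applied when it was produced.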

What This Might Look Like in Practice

To make this concrete, here are a few illustrative scenarios where the rapid-to-living pathway plays out:

- Scenario 1: Burden of disease reviews in a fast-evolving area. A team conducts several parallel targeted reviews (epidemiology, clinical burden, economic burden) early in a product’s lifecycle. As the evidence matures and strategic needs expand, these are formalized into an integrated living synthesis with consistent eligibility criteria, expanded searches, and structured extraction forms, all maintained through quarterly refresh cycles. The living framework ensures the evidence base stays current as treatment landscapes shift and new data emerges.

- Scenario 2: Clinical efficacy review from early assessment to global submissions. An AI-assisted rapid review is conducted to support early go/no-go decisions and feasibility assessments. As the product advances, the review is upgraded with expanded searches, dual screening, and a modular structure that can accommodate varying requirements across geographies. AI screening decisions from the original review are retained and documented, with human validation layered on top to meet RAISE and emerging HTA expectations. The living approach keeps the evidence current through the submission process, with locked versions maintained for specific deliverables.

- Scenario 3: Economic evaluation review scaled from early modeling to multi-market submissions. A focused rapid review of published economic evaluations informs a preliminary cost-effectiveness model. As geographic scope expands, the review is upgraded with additional databases, quality assessment tools, and broader extraction. The team eventually shifts from fixed quarterly updates to a signal-based monitoring approach when evidence accumulation slows.

Key Takeaways

If you’re considering converting a rapid review to a living evidence synthesis, a few principles are worth keeping in mind:

- Start documenting early. Even under time constraints, recording your methods systematically during the rapid review pays dividends later. The less you have to reconstruct, the smoother the conversion. This applies doubly to AI: document the tools, versions, and validation approaches from day one.

- Plan for the upgrade before you need it. If there’s any chance a rapid review will need to be expanded later, make choices during the initial review that preserve optionality: structured eligibility criteria, traceable screening decisions, and reproducible search strategies.

- Invest in the right infrastructure. The operational complexity of a living review (version control, deduplication, multi-output management, team coordination, AI governance) is fundamentally a software and workflow problem. Tools like Nested Knowledge that are purpose-built for living evidence synthesis can dramatically reduce the friction involved.

- Formalize AI use early, not late. The guidance landscape is converging. RAISE, Cochrane, NICE, and CDA-AMC all point in the same direction: AI in evidence synthesis is welcome, but it must be transparent, validated, and documented. Teams that build these practices into their workflows from the rapid review stage will find the upgrade path far smoother than those who try to retrofit documentation after the fact.

Interested in learning more about how Nested Knowledge supports living evidence synthesis? Get in touch with the team or explore the platform documentation.

References:

- Thomas J, Flemyng E, Noel-Storr A, Moy W, Marshall IJ, Hajji R, et al. Responsible use of AI in evidence SynthEsis (RAISE) 1: Recommendations for practice. In: Open Science Framework. Washington DC: Center for Open Science; 2025 (updated March 2026). https://doi.org/10.17605/OSF.IO/FWAUD

- Thomas J, Flemyng E, Noel-Storr A, Moy W, Marshall IJ, Hajji R, et al. Responsible use of AI in evidence SynthEsis (RAISE) 2: Building and evaluating AI evidence synthesis tools. In: Open Science Framework. Washington DC: Center for Open Science; 2025 (updated March 2026). https://doi.org/10.17605/OSF.IO/FWAUD

- Thomas J, Flemyng E, Noel-Storr A, Moy W, Marshall IJ, Hajji R, et al. Responsible use of AI in evidence SynthEsis (RAISE) 3: Selecting and using AI evidence synthesis tools. In: Open Science Framework. Washington DC: Center for Open Science; 2025 (updated March 2026). https://doi.org/10.17605/OSF.IO/FWAUD

- Flemyng E, Noel-Storr A, Macura B, Gartlehner G, Thomas J, Meerpohl JJ, Jordan Z, Minx J, Eisele-Metzger A, Hamel C, Jemioło P, Porritt K, Grainger M. Position statement on artificial intelligence (AI) use in evidence synthesis across Cochrane, the Campbell Collaboration, JBI, and the Collaboration for Environmental Evidence 2025. Cochrane Database of Systematic Reviews 2025, Issue 10. Art. No.: ED000178. DOI: 10.1002/14651858.ED000178.

- National Institute for Health and Care Excellence (NICE). Use of AI in evidence generation: NICE position statement. Corporate document ECD11. London: NICE; 15 August 2024. Available from: https://www.nice.org.uk/corporate/ecd11

- Canada’s Drug Agency (CDA-AMC). Position statement on the use of artificial intelligence in the generation and reporting of evidence. Ottawa: Canada’s Drug Agency; April 2025. Available from: https://www.cda-amc.ca/sites/default/files/MG%20Methods/Position_Statement_AI_Renumbered.pdf


Why, When, and How to Make the Leap

Rapid reviews are workhorses. They get evidence into the hands of decision-makers fast in order to inform early strategic planning, identify evidence gaps, and shape the direction of subsequent research. Increasingly, they also leverage artificial intelligence (AI) to compress timelines even further, with tools assisting in search strategy development, screening, extraction, and reporting.

However, the questions that initially justified a rapid review rarely disappear. The evidence base continues to evolve, stakeholders request ongoing updates, and what began as a quick-turnaround deliverable is often extended far beyond its original scope. Likewise, the AI that enabled the rapid review does not become obsolete; instead, its value increases as the review expands. At the same time, both the use of AI and its implementation must be formalized to support sustained and scalable application.

This post walks through the rationale for converting rapid reviews into living evidence syntheses, the practical considerations involved (including how to handle AI integration responsibly), and how a platform like Nested Knowledge can help manage the transition.

Rapid Reviews Sit on a Methodological Continuum

A “rapid review” means different things to different people, but in practice these reviews sit on a continuum between lightweight targeted literature reviews (TLRs) and full systematic literature reviews (SLRs), with living evidence synthesis as the natural next step.

Use of AI also maps naturally onto this continuum. At the TLR and rapid review end, teams tend to use AI freely with minimal documentation. As you move toward living synthesis, AI becomes more valuable for managing the operational burden of ongoing updates. However, it needs to be wrapped in validation, audit trails, and version-tracked methodology. The path to living synthesis isn’t about removing AI, it’s about formalizing how AI is used.

Figure 1. The methodological continuum from targeted literature review to living evidence synthesis.

Rapid reviews are uniquely suited to be developed further into comprehensive and methodologically robust syntheses due to their SLR-like framework, but with a faster, less rigorous execution. This has been common practice in HEOR for years, but there is a growing recognition that the end state shouldn’t just be a static SLR; it should be a living synthesis that’s continuously maintained and updated, with AI transparently integrated at every stage.

An Evolving Guidance Landscape for AI in Evidence Synthesis

Before getting into the mechanics of upgrading a review, it’s worth understanding the current state of guidance around AI use in evidence synthesis, because this shapes what “responsible” looks like at each point on the continuum.

In recent years, efforts to clarify best practices for responsible AI integration in evidence synthesis have led to the development of comprehensive frameworks such as the RAISE (Responsible AI in Evidence Synthesis) initiative. This collaboration across 30+ organizations has produced a three-paper framework covering recommendations for practice (RAISE 1), guidance on building and evaluating AI tools (RAISE 2), and guidance on selecting and using AI tools in specific syntheses (RAISE 3). Cochrane, the Campbell Collaboration, Joanna Briggs Institute (JBI), and the Collaboration for Environmental Evidence published a joint position statement in 2025 endorsing the RAISE framework and establishing that AI use must not compromise methodological rigor; it requires human oversight and must be transparently reported.

Guidance around use of AI in literature reviews generating evidence to support submissions to Health Technology Assessment (HTA) bodies is much thinner. The National Institute for Health and Care Excellence (NICE) published the first HTA-specific position statement on AI in evidence generation in 2024, outlining expectations around transparency, validation, human oversight, and the principle that AI should augment rather than replace human involvement. CDA-AMC (Canada’s Drug Agency) followed in 2025 with a similar statement modeled on the NICE approach. Beyond these two agencies, most HTA bodies have not yet issued formal guidance on AI use in evidence submissions.

This creates a practical tension: methodological best practice (via RAISE) sets a high bar for AI transparency and documentation, while most HTA agencies haven’t yet specified what they expect. For teams converting rapid reviews into submission-ready syntheses, the safest approach is to build documentation and validation into AI-assisted workflows from the outset, rather than hoping requirements will remain minimal.

Why Convert? Three Common Scenarios

Not every rapid review needs to become a living synthesis. The decision flowchart below can help you think through whether the conversion makes sense for your situation.

Figure 2. Decision flowchart: should you convert your rapid review?

When the answer is yes, the rationale usually stems from one of a few recurring situations:

- The evidence matures and the original review can’t keep up. Consider a scenario where a rapid review was conducted in a fast-moving therapeutic area, perhaps during the early stages of a disease outbreak or following an initial product launch. Early evidence might be dominated by case series, preprints, and preliminary data. As the field matures, that original review no longer reflects the current evidence base. A structured, living approach ensures the synthesis evolves alongside the evidence.

- Stakeholders need ongoing, up-to-date evidence across multiple use cases. A rapid review conducted to inform early cost-effectiveness modeling, for instance, may later need to support regulatory submissions, value dossier development, or clinical guideline input across different geographies. A living synthesis can serve as a single master evidence base that supports multiple downstream outputs without duplicating effort.

- The competitive or regulatory landscape shifts. New comparators emerge, treatment guidelines change, or submission requirements evolve. A static review can’t accommodate these shifts without being essentially re-done. A living framework absorbs new evidence incrementally and adapts to changing requirements over time.

What Actually Changes When You Convert?

Converting a rapid review isn’t just a matter of running more searches. The transition touches nearly every methodological dimension, from documentation and search strategy through to screening, extraction, quality assessment, and (critically) how AI is governed. Collectively, these methodological enhancements strengthen the credibility, reproducibility, and adaptability of the synthesis, allowing it to evolve in parallel with the expanding evidence base.

Table 1. Main methodological aspects that are modified when moving from a rapid review to a living synthesis.

| Task | Rapid review | Living synthesis |

| Protocol | May be informal or absent | Formal, version-controlled, predefined PICOS |

| Search strategy | 1–2 databases, focused terms, pragmatic filters | Multi-database, comprehensive, information specialist–reviewed |

| Screening | Single reviewer, possibly AI-assisted | Dual screening or single + validation sample; AI audit trail |

| Extraction | Pragmatic, key outcomes only, possibly AI-assisted with partial human verification | Structured forms, expanded scope, verified by second reviewer |

| Quality assessment | Often abbreviated or omitted | Validated tools (e.g. Cochrane RoB 2.0, Drummond’s checklist) |

| Update approach | Ad-hoc or one-time update | Scheduled or signal-based monitoring with defined cadence |

| Version control | Minimal tracking | Locked snapshots per deliverable + evolving master review |

| AI governance | Tools used freely; documentation optional | RAISE-aligned: tool versioning, validation rates, human oversight, and methods logged per cycle |

The most commonly underestimated area is search strategy. Expanding a pragmatic one- or two-database search into a comprehensive multi-database strategy often requires fundamental restructuring, not just adding terms. Engaging an information specialist at this stage, even if one wasn’t involved in the original review, is still recommended.

AI governance deserves equal attention. A rapid review might use AI-assisted screening with a brief note in the methods section. A living synthesis aligned with RAISE principles requires documenting the specific tools used (including version), how the AI was validated against human decisions, what performance thresholds were applied, and how AI-generated outputs were verified. If AI tools change between update cycles (as they inevitably will), the methodology needs to account for this transparently.

The Living Review Layer: Sustainability Matters

Making a review “living” adds another set of considerations on top of the initial conversion. The ongoing operational demands are real and shouldn’t be underestimated:

- Update cadence. Not every review benefits from fixed quarterly updates. In some fields, evidence accumulates slowly, and scheduled updates may yield nothing new. A signal-based monitoring approach, where formal updates are triggered by literature surveillance and the identification of relevant new evidence rather than a calendar date, can be more efficient.

- Version control. If the living review supports multiple outputs (e.g., submissions to different regulatory bodies on different timelines), you need a clear system for maintaining “locked” versions for specific deliverables alongside the evolving master review. Tracking which evidence, search dates, and methods apply to each output is critical and quickly becomes complex.

- AI consistency across update cycles. This is where the RAISE framework is particularly relevant. As AI tool capabilities evolve and models are updated, studies processed in earlier cycles may have been handled differently than those in later cycles. Maintaining a clear record of which AI tools, versions, and validation approaches were applied to which studies, and ensuring defensible consistency across the evidence base, is essential for both methodological integrity and potential HTA scrutiny.

How Nested Knowledge Supports the Rapid-to-Living Transition

Many of the challenges described above, from version control to AI governance and documentation, are fundamentally problems of workflow management and data provenance. Nested Knowledge was designed to address exactly these needs, and its AI capabilities are built into the platform in a way that supports transparent, auditable use by default.

AI-assisted screening. The platform offers both a Robot Screener (inclusion prediction AI) and Smart Screener (LLM) capabilities, alongside configurable dual-screening workflows where AI serves as a second reviewer with human adjudication. Critically, this means AI-assisted screening decisions are captured within the same system that manages human review, making it straightforward to document concordance rates and adjudication outcomes in line with RAISE expectations.

Updatable, integrated search. Nested Knowledge’s AutoLit module supports direct PubMed searching with updatable queries, as well as import from other databases, with automatic deduplication across sources. Search updates for a living review can be executed and tracked within the same platform where screening and extraction happen, rather than across disconnected tools.

- Smart extraction and critical appraisal. Adaptive Smart Tags and Smart Critical Appraisal tools can generate initial data extraction and quality assessment outputs that human reviewers then validate. For living reviews with recurring update cycles, this reduces the per-cycle workload while maintaining a human-verified evidence base.

- Structured tagging and hierarchy management. Nested Knowledge’s tagging hierarchy allows teams to organize and categorize evidence using structured, customizable frameworks. This is especially valuable when a living review needs to serve multiple outputs: the same underlying evidence can be tagged and filtered for different purposes without maintaining separate review databases.

- Nest versioning and archiving. Nested Knowledge allows teams to save and archive versions of a nest at defined points in time, and to create nest copies that function as subsets of the wider evidence base. This directly addresses one of the most persistent operational challenges in living reviews: maintaining locked snapshots for specific deliverables (such as an HTA submission tied to a particular search date) while continuing to update the master review. Rather than managing parallel spreadsheets or duplicating entire workflows manually, teams can branch from a single source of truth and preserve a clear lineage between the master evidence base and each downstream output.

- PRISMA and synthesis outputs. As records move through the workflow, Nested Knowledge auto-generates PRISMA diagrams and synthesis outputs (both qualitative and quantitative). For living reviews, this means reporting artifacts stay current with the evidence base rather than requiring manual reconstruction at each update.

- Audit trails and provenance tracking. Nested Knowledge logs individual screening decisions, extraction edits, and protocol changes with timestamps and user IDs across the entire review lifecycle. For living reviews that span multiple update cycles and team members, this level of granularity means every methodological decision is traceable, from who screened which record to when an extraction form was modified and by whom. This built-in provenance tracking supports RAISE-aligned transparency requirements, HTA submission documentation, and internal quality assurance without requiring teams to maintain separate audit logs.
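For teams that export paired AI and human screening decisions, the concordance rate mentioned above can be computed in a few lines. This is a minimal sketch, assuming decisions are available as two equal-length lists of include (`True`) and exclude (`False`) values. The `concordance` function and the sample data are hypothetical and are not part of the Nested Knowledge platform or its API. Cohen's kappa is shown alongside raw agreement as one common chance-corrected measure, not as a metric mandated by RAISE.

```python
def concordance(ai, human):
    """Return (raw agreement, Cohen's kappa) for paired include/exclude decisions."""
    if len(ai) != len(human) or not ai:
        raise ValueError("need two non-empty, equal-length decision lists")
    n = len(ai)
    # Proportion of records where the AI and the human made the same call.
    observed = sum(a == h for a, h in zip(ai, human)) / n
    # Chance agreement, from each rater's marginal inclusion rate.
    p_ai = sum(ai) / n
    p_human = sum(human) / n
    expected = p_ai * p_human + (1 - p_ai) * (1 - p_human)
    # Kappa is undefined when chance agreement is 1; this sketch returns None.
    kappa = None if expected == 1 else (observed - expected) / (1 - expected)
    return observed, kappa


# Made-up decisions for eight records (True = include).
ai_calls = [True, True, False, False, True, False, False, False]
human_calls = [True, False, False, False, True, False, True, False]

agreement, kappa = concordance(ai_calls, human_calls)
print(f"agreement={agreement:.2f}, kappa={kappa:.2f}")
```

On this toy data the two reviewers agree on six of eight records (0.75), with a kappa of about 0.47. Reporting both figures per update cycle makes it easier to spot drift if an AI tool or model version changes between cycles.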

What This Might Look Like in Practice

To make this concrete, here are a few illustrative scenarios where the rapid-to-living pathway plays out:

- Scenario 1: Burden of disease reviews in a fast-evolving area. A team conducts several parallel targeted reviews (epidemiology, clinical burden, economic burden) early in a product’s lifecycle. As the evidence matures and strategic needs expand, these are formalized into an integrated living synthesis with consistent eligibility criteria, expanded searches, and structured extraction forms, all maintained through quarterly refresh cycles. The living framework ensures the evidence base stays current as treatment landscapes shift and new data emerges.

- Scenario 2: Clinical efficacy review from early assessment to global submissions. An AI-assisted rapid review is conducted to support early go/no-go decisions and feasibility assessments. As the product advances, the review is upgraded with expanded searches, dual screening, and a modular structure that can accommodate varying requirements across geographies. AI screening decisions from the original review are retained and documented, with human validation layered on top to meet RAISE and emerging HTA expectations. The living approach keeps the evidence current through the submission process, with locked versions maintained for specific deliverables.

- Scenario 3: Economic evaluation review scaled from early modeling to multi-market submissions. A focused rapid review of published economic evaluations informs a preliminary cost-effectiveness model. As geographic scope expands, the review is upgraded with additional databases, quality assessment tools, and broader extraction. The team eventually shifts from fixed quarterly updates to a signal-based monitoring approach when evidence accumulation slows.

Key Takeaways

If you’re considering converting a rapid review to a living evidence synthesis, a few principles are worth keeping in mind:

- Start documenting early. Even under time constraints, recording your methods systematically during the rapid review pays dividends later. The less you have to reconstruct, the smoother the conversion. This applies doubly to AI: document the tools, versions, and validation approaches from day one.

- Plan for the upgrade before you need it. If there’s any chance a rapid review will need to be expanded later, make choices during the initial review that preserve optionality: structured eligibility criteria, traceable screening decisions, and reproducible search strategies.

- Invest in the right infrastructure. The operational complexity of a living review (version control, deduplication, multi-output management, team coordination, AI governance) is fundamentally a software and workflow problem. Tools like Nested Knowledge that are purpose-built for living evidence synthesis can dramatically reduce the friction involved.

- Formalize AI use early, not late. The guidance landscape is converging. RAISE, Cochrane, NICE, and CDA-AMC all point in the same direction: AI in evidence synthesis is welcome, but it must be transparent, validated, and documented. Teams that build these practices into their workflows from the rapid review stage will find the upgrade path far smoother than those who try to retrofit documentation after the fact.

Interested in learning more about how Nested Knowledge supports living evidence synthesis? Get in touch with the team or explore the platform documentation.

References:

- Thomas J, Flemyng E, Noel-Storr A, Moy W, Marshall IJ, Hajji R, et al. Responsible use of AI in evidence SynthEsis (RAISE) 1: Recommendations for practice. In: Open Science Framework. Washington DC: Center for Open Science; 2025 (updated March 2026). https://doi.org/10.17605/OSF.IO/FWAUD

- Thomas J, Flemyng E, Noel-Storr A, Moy W, Marshall IJ, Hajji R, et al. Responsible use of AI in evidence SynthEsis (RAISE) 2: Building and evaluating AI evidence synthesis tools. In: Open Science Framework. Washington DC: Center for Open Science; 2025 (updated March 2026). https://doi.org/10.17605/OSF.IO/FWAUD

- Thomas J, Flemyng E, Noel-Storr A, Moy W, Marshall IJ, Hajji R, et al. Responsible use of AI in evidence SynthEsis (RAISE) 3: Selecting and using AI evidence synthesis tools. In: Open Science Framework. Washington DC: Center for Open Science; 2025 (updated March 2026). https://doi.org/10.17605/OSF.IO/FWAUD

- Flemyng E, Noel-Storr A, Macura B, Gartlehner G, Thomas J, Meerpohl JJ, Jordan Z, Minx J, Eisele-Metzger A, Hamel C, Jemioło P, Porritt K, Grainger M. Position statement on artificial intelligence (AI) use in evidence synthesis across Cochrane, the Campbell Collaboration, JBI, and the Collaboration for Environmental Evidence 2025. Cochrane Database of Systematic Reviews 2025, Issue 10. Art. No.: ED000178. DOI: 10.1002/14651858.ED000178.

- National Institute for Health and Care Excellence (NICE). Use of AI in evidence generation: NICE position statement. Corporate document ECD11. London: NICE; 15 August 2024. Available from: https://www.nice.org.uk/corporate/ecd11

- Canada’s Drug Agency (CDA-AMC). Position statement on the use of artificial intelligence in the generation and reporting of evidence. Ottawa: Canada’s Drug Agency; April 2025. Available from: https://www.cda-amc.ca/sites/default/files/MG%20Methods/Position_Statement_AI_Renumbered.pdf